From Arcade to Living Room: Offline Coding Models Hit Their Console Moment

TL;DR

If you lived through the 1980s and early 1990s arcade era, you remember the jump to home consoles: still behind the best cabinets, but suddenly available in a safer, cheaper, more comfortable place — your home. Think Street Fighter II: the arcade board had the edge in raw smoothness and fidelity, yet players still poured countless hours into the SNES and Genesis versions, because the experience was finally always within reach.

AI coding is in a similar moment. The best capability still lives behind a hosted model API, much as the best version of a game once lived in the arcade. But open-weight models that fit on a single workstation (27B–32B class) now reach Terminal-Bench 2.0 pass rates close to what state-of-the-art frontier systems were posting roughly 6–8 months ago. They are not at parity with today's best hosted models. Still, for teams that cannot send code and data to external APIs (regulated environments, air-gapped deployments, customer-data-sensitive workflows, or offline laptops), the gap is finally small enough that "run it locally" is a serious engineering option rather than a thought experiment.

At Antigma Labs, we are building Ante Agent to preserve state-of-the-art agent capability while continuously improving the local-model experience. Ante now ships a streamlined local-model orchestration stack, including one-click inference-engine setup and local hosting workflows. Users can extend it with their own local models, while we maintain and test a curated, verified-model list for reliable defaults. The observations below are an early snapshot from that work, focused on models that can be hosted relatively comfortably on consumer GPUs.

1. What we measured

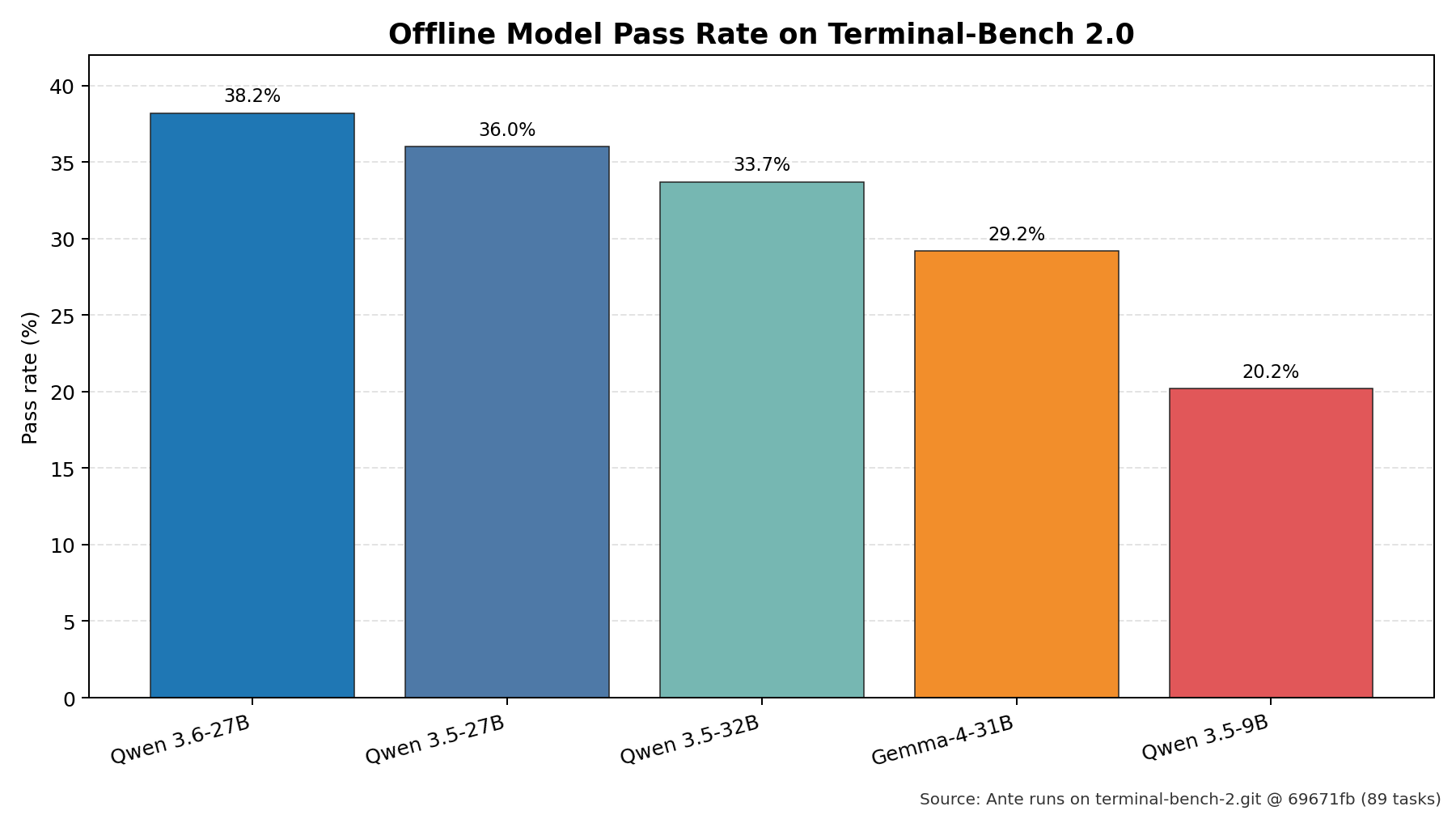

All runs below use the same Terminal-Bench 2.0 task suite (`terminal-bench-2.git` @ `69671fb`, 89 tasks), driven by the `ante` agent harness. A task counts as passed when its verifier returns `reward == 1.0`; pass rate is passed / 89.

Tested offline models included Qwen 3.6-27B, Qwen 3.5-27B, Qwen 3.5-32B, Gemma-4-31B, GLM-4.6V-Flash, Qwen 3.5-9B, and Gemma-4-E4B-it (~4B). The 4B-class result is excluded from the main table because very small models can emit unstable or invalid tool calls, making outcomes hard to reproduce.

| Model (offline) | Harness | Pass rate on TB 2.0 |
|---|---|---|
| Qwen 3.6-27B | ante | 38.2 % (34/89) |
| Qwen 3.5-27B | ante | 36.0 % (32/89) |
| Qwen 3.5-32B | ante | 33.7 % (30/89) |
| Gemma-4-31B | ante | 29.2 % (26/89) |
| Qwen 3.5-9B | ante | 20.2 % (18/89) |
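
As a quick check on the arithmetic, the percentages in the table can be reproduced from the passed-task counts. This is a minimal sketch; the function and variable names are ours for illustration and are not part of the harness.

```python
# Passed-task counts copied from the table; 89 is the size of the task suite.
TOTAL_TASKS = 89
passed = {
    "Qwen 3.6-27B": 34,
    "Qwen 3.5-27B": 32,
    "Qwen 3.5-32B": 30,
    "Gemma-4-31B": 26,
    "Qwen 3.5-9B": 18,
}


def pass_rate(n_passed: int, total: int = TOTAL_TASKS) -> float:
    """Return the pass rate as a percentage, rounded to one decimal place."""
    return round(100 * n_passed / total, 1)


rates = {model: pass_rate(n) for model, n in passed.items()}
for model, rate in rates.items():
    print(f"{model}: {rate} % ({passed[model]}/{TOTAL_TASKS})")
```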

[Figure: visual snapshot of pass rate on Terminal-Bench 2.0]

The failure patterns are instructive: small (≤9B) models mostly fail on agentic behaviours — missing required tool-call fields, getting stuck in repeated WebFetch loops, producing a natural-language plan and then stopping without executing it. Even so, 20.2% is not nothing; it is already enough to make these models useful on a non-trivial slice of short, well-scoped tasks. The 27B–32B class clears those basic agentic hurdles, and its residual failures look more like frontier-model failure modes: time-outs on big builds, subtle requirement misreads, giving up after a single compilation error.

A note on timeout budgets. Every result in the table above uses the default Terminal-Bench 2.0 per-task timeout — the same budget the public leaderboard runs under — to keep the comparison apples-to-apples. This matters because a non-trivial share of the 27B-class failures above are time-outs on large builds, not correctness errors. For context, Qwen's own published evaluation of Qwen 3.6-27B on TB 2.0 uses a 3-hour timeout and lands at 59.3%. Our 38.2% is therefore a default-budget number, not a ceiling: given more wall-clock time, the same offline model gets materially further. We return to this in §4.
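
One way to separate time-outs from correctness failures is to tally per-task results by cause. The sketch below assumes each result is a record with the verifier's `reward` plus a `timed_out` flag; the flag name and the sample records are hypothetical and do not reflect the harness's actual output format or our run data.

```python
from collections import Counter


def classify(result: dict) -> str:
    """Label one task result as 'pass', 'timeout', or 'verifier_fail'."""
    if result["reward"] == 1.0:
        return "pass"
    # `timed_out` is an assumed field name, used here only for illustration.
    return "timeout" if result["timed_out"] else "verifier_fail"


def tally(results: list[dict]) -> Counter:
    """Count how many task results fall into each category."""
    return Counter(classify(r) for r in results)


# Illustrative records only, not data from our runs.
sample = [
    {"task": "a", "reward": 1.0, "timed_out": False},
    {"task": "b", "reward": 0.0, "timed_out": True},
    {"task": "c", "reward": 0.0, "timed_out": False},
    {"task": "d", "reward": 0.0, "timed_out": True},
]
counts = tally(sample)
print(dict(counts))
```

A breakdown like this makes it easier to see how much of a failure count could move under a longer per-task budget and how much is a correctness problem that more time would not fix.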

2. How local use feels in practice

The benchmark results above were produced separately; the hardware discussion in this section is only about local usability and interaction smoothness. To keep that experience close to what an individual developer might actually use, we ran the local-hosting tests on a machine with 64 GB of system RAM and an RTX 3060 12 GB. That is not a datacenter setup; it is a fairly ordinary prosumer desktop. For consistency, the throughput numbers below are all from Q4_K_M quantized GGUF variants.

The first prompt in an agent run is the worst case for latency: it can carry several thousand — sometimes well over ten thousand — input tokens before the model emits anything. In these logs, some first turns were in the ~15k-token range, and a few runs went past 25k prompt tokens. Even so, the overall interaction was usually smoother than those first-turn numbers suggest.

Approximate throughput from the local inference logs:

| Model | Prompt processing | Generation speed |
|---|---|---|
| Qwen 3.5-9B | ~1355 tokens/s | ~43.6 tokens/s |
| Qwen 3.6-27B | ~150 tokens/s | ~1.9 tokens/s |
| Qwen 3.6-27B (RTX 5090 32 GB) | ~1.7k–3.3k tokens/s | ~64–70 tokens/s |
| Qwen 3.6-35B-A3B | ~206 tokens/s | ~29.9 tokens/s |
| Gemma-4-26B-A4B | ~376 tokens/s | ~27.1 tokens/s |
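
To turn these rates into a feel for waiting time, a rough estimate is prompt tokens divided by prompt-processing speed plus output tokens divided by generation speed. The sketch below assumes a 15k-token first turn (in the range reported above) and a 200-token reply, which is a hypothetical length chosen for illustration. It ignores prompt caching and any other overhead.

```python
# (prompt tokens/s, generation tokens/s) copied from the table above.
# Where the table gives a range, the low end is used.
THROUGHPUT = {
    "Qwen 3.5-9B (RTX 3060 12 GB)": (1355.0, 43.6),
    "Qwen 3.6-27B (RTX 3060 12 GB)": (150.0, 1.9),
    "Qwen 3.6-27B (RTX 5090 32 GB)": (1700.0, 64.0),
    "Qwen 3.6-35B-A3B (RTX 3060 12 GB)": (206.0, 29.9),
    "Gemma-4-26B-A4B (RTX 3060 12 GB)": (376.0, 27.1),
}


def turn_seconds(prompt_tokens: int, output_tokens: int,
                 prompt_tps: float, gen_tps: float) -> float:
    """Estimate one turn's wall-clock time: prompt ingestion plus generation."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


PROMPT_TOKENS = 15_000  # first-turn size in the range reported above
OUTPUT_TOKENS = 200     # hypothetical reply length, for illustration only

estimates = {
    name: turn_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, p_tps, g_tps)
    for name, (p_tps, g_tps) in THROUGHPUT.items()
}
for name, secs in estimates.items():
    print(f"{name}: ~{secs:.0f} s")
```

Because the estimate assumes a cold prompt, it describes the worst-case first turn rather than a typical later turn.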

The practical takeaway is more nuanced than a single average-latency number suggests. Because most of these models are larger than 12 GB of VRAM, they are effectively running with a hybrid RAM + VRAM placement rather than fitting cleanly into GPU memory. The logs show this directly: larger models spill substantial state into host memory, while only part of the weights or KV cache stays on the GPU.

In that hybrid setup, MoE and dense models behave very differently. The MoE models here — especially Qwen 3.6-35B-A3B and Gemma-4-26B-A4B — still generate at roughly 30 tokens/s, which feels responsive in real use with no obvious stutter. The dense Qwen 3.6-27B, by contrast, is much slower at generation time on this machine, closer to ~2 tokens/s; output feels noticeably draggy even though prompt ingestion is still reasonable.
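
A back-of-the-envelope calculation is consistent with this split. The sketch below assumes an average of about 4.85 bits per weight for Q4_K_M (an approximation that varies by model), reads total and active parameter counts off the model names, and ignores KV cache and runtime overhead. It estimates the weight footprint and the weights touched per generated token; it is a plausible explanation, not something measured in our logs.

```python
BITS_PER_WEIGHT = 4.85  # assumed average for Q4_K_M; the real figure varies
VRAM_GB = 12.0          # RTX 3060 12 GB

# (total params in billions, params active per token in billions),
# inferred from the model names; treat these as approximations.
MODELS = {
    "Qwen 3.5-9B": (9, 9),
    "Qwen 3.6-27B": (27, 27),
    "Qwen 3.6-35B-A3B": (35, 3),
    "Gemma-4-26B-A4B": (26, 4),
}


def weight_gb(params_billion: float, bits: float = BITS_PER_WEIGHT) -> float:
    """Approximate size in GB (1e9 bytes) of the quantized weights."""
    return params_billion * bits / 8


report = {}
for name, (total_b, active_b) in MODELS.items():
    report[name] = {
        "total_gb": weight_gb(total_b),
        "active_gb_per_token": weight_gb(active_b),
        "fits_in_vram": weight_gb(total_b) <= VRAM_GB,
    }
    r = report[name]
    print(f"{name}: ~{r['total_gb']:.1f} GB total, "
          f"~{r['active_gb_per_token']:.1f} GB read per token, "
          f"fits in 12 GB: {r['fits_in_vram']}")
```

Under these assumptions the dense 27B model has to stream all of its weights for every token, much of it from host RAM, while the MoE models touch only a few billion parameters per token even though their total footprint also exceeds 12 GB.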

That slowdown reflects how the model fits this machine's memory, not a limit of the model itself. On an RTX 5090 32 GB, the same Qwen 3.6-27B reaches roughly 64–70 tokens/s generation speed across several requests (for example 69.0, 70.4, 70.2, 67.1, 66.8, 66.5, and 64.1 tokens/s), with prompt processing typically around 1.7k–3.3k tokens/s. At that point the model is no longer merely "able to run"; it is in the range that feels smooth enough for normal interactive use, whether for local coding or agent-style work.

So the realistic conclusion is not that every local model felt equally smooth. Local use on a 64 GB RAM + RTX 3060 12 GB machine is already viable, but the experience depends heavily on model architecture and memory fit. On an RTX 5090 32 GB, even the dense Qwen 3.6-27B becomes fluent in practice rather than borderline usable.

3. Where 38.2% sits relative to the frontier

For the leaderboard comparison, we use the public Terminal-Bench 2.0 leaderboard (snapshot 2026-04-23) filtered to verified-only entries.

| Rank | Agent | Model | Accuracy | Date |
|---|---|---|---|---|
| 1 | Codex | GPT-5.5 | 82.0 % ± 2.2 | 2026-04-23 |
| 2 | Simple Codex | GPT-5.3-Codex | 75.1 % ± 2.4 | 2026-02-06 |
| 3 | Ante | Gemini 3 Pro | 69.4 % ± 2.1 | 2026-01-06 |
| 4 | Terminus 2 | GPT-5.3-Codex | 64.7 % ± 2.7 | 2026-02-05 |
| 5 | Terminus 2 | Claude Opus 4.6 | 62.9 % ± 2.7 | 2026-02-06 |

In absolute terms, the current verified SOTA on Terminal-Bench 2.0 is 82.0% (Codex with GPT-5.5), well ahead of the best offline model we ran (38.2%). That gap is real, and for maximum-capability agentic coding, hosted frontier models remain the right tool.

The more practical question is: what time window of hosted-agent performance does 38.2% correspond to? We answer it by anchoring on model release time (not leaderboard launch time), then checking verified entries from agent families most readers track — Claude Code, Codex CLI, Terminus 2 — near the 38.2% line.

| Agent | Model | Model release date | Accuracy | Verified run date | Delta vs 38.2% |
|---|---|---|---|---|---|
| Terminus 2 | Claude Opus 4.1 | 2025-08-05 | 38.0 % ± 2.6 | 2025-10-31 | -0.2 pt |
| Terminus 2 | GPT-5.1-Codex | 2025-11-13 | 36.9 % ± 3.2 | 2025-11-17 | -1.3 pt |
| Claude Code | Claude Sonnet 4.5 | 2025-09-29 | 40.1 % ± 2.9 | 2025-11-04 | +1.9 pt |
| Codex CLI | GPT-5-Codex | 2025-09-15 | 44.3 % ± 2.7 | 2025-11-04 | +6.1 pt |

On this basis, 38.2% maps most cleanly to models released in roughly Aug–Nov 2025, with the closest cluster of verified runs in late Oct to mid Nov 2025. It sits almost on top of Terminus 2 + Opus 4.1 (38.0%), slightly below Claude Code + Sonnet 4.5 (40.1%), and below Codex CLI + GPT-5-Codex (44.3%).
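
The deltas in the comparison table can be recomputed and ranked by distance from 38.2%. The sketch below also checks whether 38.2% falls inside each entry's reported ± band. We treat that band simply as the leaderboard's published uncertainty, without assuming how it was derived, and our own number comes from a different harness, so this is only a rough guide.

```python
OFFLINE_BEST = 38.2  # Qwen 3.6-27B with the ante harness, default timeout

# (agent, model, accuracy %, reported +/- in points), copied from the table.
entries = [
    ("Terminus 2", "Claude Opus 4.1", 38.0, 2.6),
    ("Terminus 2", "GPT-5.1-Codex", 36.9, 3.2),
    ("Claude Code", "Claude Sonnet 4.5", 40.1, 2.9),
    ("Codex CLI", "GPT-5-Codex", 44.3, 2.7),
]


def delta(accuracy: float, reference: float = OFFLINE_BEST) -> float:
    """Signed gap in percentage points: leaderboard entry minus our result."""
    return round(accuracy - reference, 1)


def within_band(accuracy: float, plus_minus: float,
                reference: float = OFFLINE_BEST) -> bool:
    """True when the reference lies inside accuracy +/- plus_minus."""
    return abs(accuracy - reference) <= plus_minus


# Closest entries first.
ranked = sorted(entries, key=lambda e: abs(e[2] - OFFLINE_BEST))
for agent, model, acc, pm in ranked:
    print(f"{agent} + {model}: {delta(acc):+.1f} pt, "
          f"38.2% inside reported band: {within_band(acc, pm)}")
```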

The gap to today's frontier is better described as a ~6–8-month lag:

- Apr 2026 SOTA: ~80% (GPT-5.5, Opus 4.6, Gemini 3.1 Pro)

- Time-equivalent model-release window for ~38% quality: roughly Aug–Nov 2025

- Apr 2026 best offline: ~38% (Qwen 3.6-27B, ante)

The headline is consistent from either direction: today's best runnable-offline model is roughly 6–8 months behind today's frontier.
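
The lag can be made concrete by measuring from each comparison model's release date to the leaderboard snapshot date. This is a minimal sketch using the dates quoted above; converting days to months with an average month length of 365.25 / 12 days is our own convention.

```python
from datetime import date

SNAPSHOT = date(2026, 4, 23)  # leaderboard snapshot used in this post

# Model release dates copied from the comparison table.
RELEASES = {
    "Claude Opus 4.1": date(2025, 8, 5),
    "GPT-5-Codex": date(2025, 9, 15),
    "Claude Sonnet 4.5": date(2025, 9, 29),
    "GPT-5.1-Codex": date(2025, 11, 13),
}

DAYS_PER_MONTH = 365.25 / 12  # average month length; an approximation


def months_between(start: date, end: date) -> float:
    """Elapsed time from start to end, expressed in average months."""
    return (end - start).days / DAYS_PER_MONTH


lag = {model: months_between(d, SNAPSHOT) for model, d in RELEASES.items()}
for model, months in lag.items():
    print(f"{model}: {months:.1f} months before the snapshot")
```

On these dates the lags run from a little over five months (GPT-5.1-Codex) to a little under nine (Claude Opus 4.1), with the two September releases falling inside the rounded 6–8-month band.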

[Figure: visual map of the time lag]

4. Why "about 6–8 months behind" is enough for real deployments

For a lot of real work, "about 6–8 months behind the frontier" is not a deal-breaker — and this is the first time it has been true for agentic coding. If you remember what coding agents could already do 6–8 months ago, this capability band should not feel hypothetical: by late 2025, many teams were already relying on them for substantial real work. Concretely:


- Privacy-first environments. Legal, healthcare, finance, and defence deployments that cannot send code or data to a third-party API were previously forced to either accept a 0–15% pass-rate class of model or skip coding agents entirely. 38% on TB 2.0 — in the same band as Claude Code + Sonnet 4.5 in November 2025 — changes the risk calculus.

- Air-gapped or bandwidth-constrained environments. Field deployments, on-prem CI, edge and robotics workbenches, and research clusters without outbound internet can now run a credible agent loop locally.

- Cost / rate-limit sensitivity. Teams running thousands of agent trials (for evaluation, data generation, or unsupervised repo maintenance) can absorb a moderate capability hit in exchange for eliminating per-token cost and API rate limits.

- Relaxed time budgets. Overnight batch runs, offline repo maintenance, and research data-generation pipelines do not actually need the default Terminal-Bench per-task timeout. Under Qwen's own 3-hour-per-task evaluation, Qwen 3.6-27B reaches 59.3% on Terminal-Bench 2.0, compared to our 38.2% at the default budget — if you can afford to wait, the usable offline ceiling is noticeably higher than the headline number.

- Hybrid routing. Because the 27B-class failure modes look like frontier failure modes (time-outs, missed requirements) rather than "can't call a tool at all", these models are a plausible default tier in a router that escalates hard tasks to a hosted frontier model only when local confidence is low.
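
The hybrid-routing idea in the last bullet can be sketched as a small local-first router. Everything here is hypothetical: the `Attempt` type, the confidence score, the 0.7 threshold, and the two callables are illustrative names, not Ante's API, and how to obtain a trustworthy confidence signal is left open.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Attempt:
    output: str
    confidence: float  # hypothetical score in [0, 1] from the local run


def route(task: str,
          run_local: Callable[[str], Attempt],
          run_hosted: Callable[[str], str],
          threshold: float = 0.7) -> tuple[str, str]:
    """Try the local model first; escalate only when confidence is low.

    Returns (tier, output), where tier is "local" or "hosted".
    """
    attempt = run_local(task)
    if attempt.confidence >= threshold:
        return "local", attempt.output
    return "hosted", run_hosted(task)


# Stub backends standing in for real model calls.
def fake_local(task: str) -> Attempt:
    confidence = 0.9 if len(task) < 40 else 0.3
    return Attempt(output=f"local:{task}", confidence=confidence)


def fake_hosted(task: str) -> str:
    return f"hosted:{task}"


easy = route("rename a variable", fake_local, fake_hosted)
hard = route("port the build system to a new toolchain and fix all tests",
             fake_local, fake_hosted)
print(easy)
print(hard)
```

In environments that cannot send code to an external API at all, the escalation branch would have to be disabled or replaced with a hand-off to a human reviewer.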

5. Caveats

- We compare against verified agents only on the public leaderboard. This is deliberate: Terminal-Bench is actively removing suspicious submissions, and verified entries give a cleaner baseline.

- The local numbers use the `ante` harness, while the leaderboard numbers come from different harnesses (Claude Code, Mini-SWE-Agent, Goose, Terminus, ForgeCode, …). Harness effects on TB 2.0 are known to be worth several percentage points, so the comparison is order-of-magnitude, not head-to-head.

- The gap below the 27B tier is still very large: Qwen 3.5-9B gets 20% and the 4B Gemma variant gets 0%. "Offline models work" in 2026 means "mid-sized open-weight models work". Sub-10B models are not yet in the same conversation for agentic coding.

- Benchmark ≠ production. TB 2.0 is a curated, verifier-scored suite; real repos introduce longer contexts, ambiguous specs, and human-in-the-loop interaction that can widen or narrow the gap.

6. Bottom line

If your constraint is maximum capability, the hosted frontier is still the right answer in April 2026. If your constraint is privacy, air-gapping, cost, or control, open-weight models in the 27B–32B class (Qwen 3.6-27B in particular, on our runs) now deliver roughly the Terminal-Bench 2.0 quality that the frontier was shipping in late 2025 — about 6–8 months earlier.

The arcade-to-console jump is itself a small echo of a much longer pattern. When I first entered university, one of my professors described an earlier era of computing: punch cards, manual decimal-to-binary conversion, and all the small mechanical steps that once felt unavoidable. At the time, that world sounded almost unbelievable. I suspect today's programming workflows will feel the same way to the next generation — they may look back with some surprise that we still hand-wrote BFS, or built apps and small games line by line, much as we now look back at punch cards and manual base conversion.

That is the deeper reason this moment matters: for the first time, offline coding models feel less like a curiosity and more like the beginning of a transition.